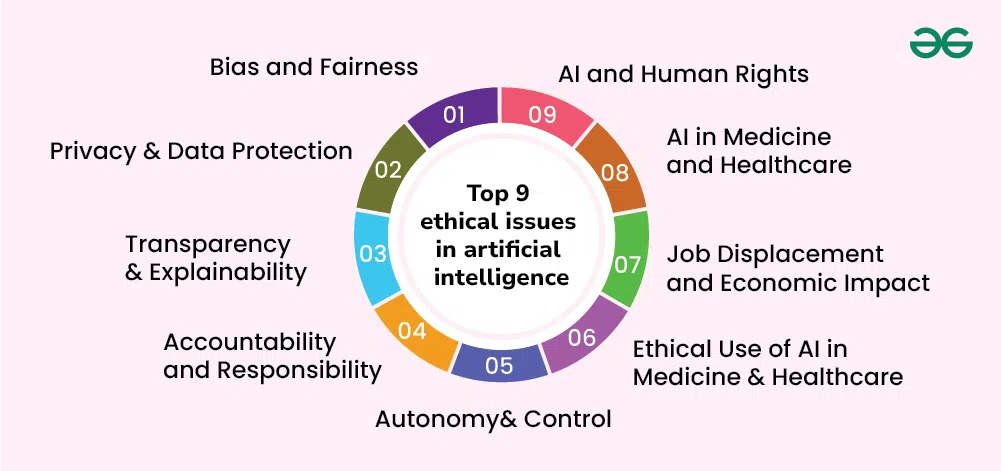

Introduction: Artificial intelligence (AI) is revolutionizing sectors such as healthcare, finance, recruitment, and policing. Yet as its power grows, the most pressing question is: can we trust AI systems to be fair? Bias in AI leads to discriminatory algorithmic outcomes, usually rooted in human biases reflected in the data. Unethical AI can perpetuate inequality, from facial recognition programs that discriminate by race to hiring tools that disadvantage women.

In this article, we will discuss what causes AI bias, real-world examples of biased algorithms, strategies for building fairer AI systems, and the future of ethical AI. By the end, you will know whether we can actually build fair AI, and what it will take to get there.

What is AI Bias?

AI bias is the unfairness that occurs when machine learning models produce skewed results because of flawed training data or design decisions. Unlike the bias of an individual person, AI bias is systematic, so it can spread discrimination quickly and at scale.

Kinds of AI Bias

Data Bias: When some groups are over-represented in the training data relative to others. Example: A hiring AI trained primarily on resumes from men might favor male candidates.

Algorithmic Bias: When the model itself reproduces or amplifies bias. Example: Predictive policing tools that label minority neighborhoods as high-crime because those neighborhoods account for more past arrests.

User Bias: When human interaction with a system reinforces discrimination. Example: Social media algorithms that promote divisive content because it attracts more engagement.

What Real-World AI Bias Looks Like

1. Facial Recognition and Racial Bias

Problem: Facial recognition systems have been observed to have higher error rates on faces with darker skin tones.

Case: In a 2018 test by the ACLU, Amazon Rekognition falsely matched 28 members of Congress with mugshots of people who had been arrested, and the false matches disproportionately involved people of color.

Implication: Misidentification can result in wrongful arrests or unwarranted police surveillance.

2. Gender Discrimination in Hiring Tools

Problem: In 2018, it was reported that Amazon had abandoned an AI recruiting system after it downgraded resumes containing the word "women's" (e.g., "women's chess club").

Root Cause: The AI was trained on historical hiring data dominated by male applicants, so it learned to favor men for tech positions.

3. Bias in Healthcare AI

Problem: An algorithm used in US hospitals prioritized White patients over Black patients for extra care.

Why? It used past healthcare spending as a proxy for medical need, ignoring existing systemic differences in access to care.

Is It Possible to Build Ethical Algorithms?

Yes, but it takes active effort in data collection, model design, and regulation. Here's how:

1. Training Data Diversity

Make sure that training datasets reflect diverse populations.

Example: IBM's Diversity in Faces project aimed to improve the fairness of facial recognition by providing data that covers a wider range of skin tones.
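One simple way to check whether a dataset reflects a diverse population is to measure each group's share of the records. The sketch below is a minimal illustration, not a method taken from any of the projects mentioned here: the field name `skin_tone`, the toy records, and the 30% threshold are all assumptions chosen for the example.

```python
from collections import Counter

def group_shares(records, group_key):
    """Return each group's share of the dataset as a fraction of all records."""
    counts = Counter(record[group_key] for record in records)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}

def underrepresented(shares, min_share):
    """List groups whose share falls below a chosen minimum threshold."""
    return sorted(group for group, share in shares.items() if share < min_share)

# Toy, made-up records for illustration only (hypothetical field name).
training_records = [{"skin_tone": "lighter"}] * 8 + [{"skin_tone": "darker"}] * 2

shares = group_shares(training_records, "skin_tone")
flagged = underrepresented(shares, min_share=0.3)  # threshold is an assumption

print(shares)
print(flagged)
```

What counts as a sufficient share depends on the application and the population the system will serve, so the threshold should be set deliberately rather than copied from this sketch.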

2. Bias Detection Tools

Tools such as IBM's AI Fairness 360 and Google's What-If Tool help developers audit their models for bias.
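To give a sense of what a bias audit measures, here is a minimal sketch of two common group fairness metrics, statistical parity difference and disparate impact, written in plain Python. It does not use the API of either tool named above, and the decision lists are made-up data. A disparate impact ratio below 0.8 is often treated as a warning sign (the "four-fifths" rule of thumb), though that cutoff is a convention, not a guarantee of fairness or unfairness.

```python
def selection_rate(decisions):
    """Fraction of positive (1) decisions in a list of 0/1 outcomes."""
    return sum(decisions) / len(decisions)

def statistical_parity_difference(unprivileged, privileged):
    """Selection rate of the unprivileged group minus that of the privileged group.
    A value of 0 means both groups are selected at the same rate."""
    return selection_rate(unprivileged) - selection_rate(privileged)

def disparate_impact(unprivileged, privileged):
    """Ratio of selection rates; 1.0 means parity.
    Assumes the privileged group has at least one positive decision."""
    return selection_rate(unprivileged) / selection_rate(privileged)

# Made-up hiring decisions (1 = advanced to interview), for illustration only.
men_decisions = [1, 1, 1, 0, 1, 0, 1, 1, 0, 1]
women_decisions = [1, 0, 0, 1, 0, 0, 1, 0, 0, 0]

spd = statistical_parity_difference(women_decisions, men_decisions)
di = disparate_impact(women_decisions, men_decisions)

print(f"Statistical parity difference: {spd:.2f}")
print(f"Disparate impact ratio: {di:.2f}")
```

These metrics only compare outcomes between groups. They do not explain why a gap exists, which is why audits usually combine them with a closer look at the data and the model.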

3. Explainable AI (XAI)

If an AI denies a loan, it should be able to justify the decision rather than act as a black box.
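For a simple linear scoring model, an explanation can be as direct as listing how much each input pushed the score up or down. The sketch below assumes a hypothetical loan model: the feature names, weights, and threshold are invented for illustration and do not come from any real lender. More complex models need dedicated explanation techniques, which this example does not cover.

```python
def explain_decision(weights, bias, applicant, threshold=0.0):
    """Score an applicant with a linear model and rank feature contributions.

    Returns (approved, score, ranked), where ranked lists (feature, contribution)
    pairs from the most negative contribution to the most positive.
    """
    contributions = {name: weights[name] * applicant[name] for name in weights}
    score = bias + sum(contributions.values())
    approved = score >= threshold
    ranked = sorted(contributions.items(), key=lambda item: item[1])
    return approved, score, ranked

# Hypothetical model parameters and applicant, for illustration only.
weights = {"income_thousands": 0.02, "debt_ratio": -3.0, "missed_payments": -0.5}
bias = 0.5
applicant = {"income_thousands": 40, "debt_ratio": 0.6, "missed_payments": 2}

approved, score, ranked = explain_decision(weights, bias, applicant)

print("Approved" if approved else "Denied", f"(score {score:.2f})")
for feature, contribution in ranked:
    print(f"  {feature}: {contribution:+.2f}")
```

Listing the factors that lowered the score the most gives the applicant something concrete to question or act on, which is the practical point of explainability.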

4. Human Oversight and Ethical AI Teams

Companies such as Microsoft and Google run ethics committees to assess risky AI applications.

5. Regulatory Frameworks

The EU AI Act and the proposed US Algorithmic Accountability Act aim to ensure fairness in AI systems.

Ethical AI in the Future

Creating ethical AI is not only a technical issue but a social one too. Here is what comes next:

1. More Transparency

AI companies should disclose how their models work and what data they collect.

2. Continuous Auditing

AI systems should be checked for bias regularly, even after deployment.
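A post-deployment check can be as simple as recomputing a fairness gap on each new batch of decisions and flagging the batches that drift past a tolerance. The sketch below is one possible shape for such a check, assuming decisions are logged per group as 0/1 outcomes. The batch labels, group names, data, and the 0.2 tolerance are all made up for illustration.

```python
def selection_rate_gap(batch):
    """Largest difference in positive-decision rate between any two groups.

    `batch` maps a group name to a non-empty list of 0/1 decisions.
    """
    rates = {group: sum(d) / len(d) for group, d in batch.items()}
    return max(rates.values()) - min(rates.values())

def audit_batches(batches, max_gap):
    """Return the labels of batches whose selection-rate gap exceeds max_gap."""
    return [label for label, batch in batches if selection_rate_gap(batch) > max_gap]

# Made-up weekly decision logs, for illustration only.
weekly_batches = [
    ("week_1", {"group_a": [1, 0, 1, 0], "group_b": [1, 0, 0, 1]}),
    ("week_2", {"group_a": [1, 1, 1, 0], "group_b": [0, 0, 1, 0]}),
]

alerts = audit_batches(weekly_batches, max_gap=0.2)  # tolerance is an assumption
print(alerts)
```

A flagged batch is a prompt for human review, not proof of discrimination, since small batches can show large gaps by chance.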

3. Public-Private Partnership

Governments, technology companies, and activists have to cooperate to establish ethical standards for AI.

4. AI for Social Good

Fair AI systems can help reduce inequality, provided that they are built responsibly.

Conclusion: The Path to Fair AI

Bias is a growing problem in AI, but it is not unsolvable. By improving data diversity, enforcing transparency, and adopting strong regulation, we can make algorithms fair, ethical, and helpful to everyone. The decisions we make today will determine the future of AI. Will we accept biased systems that widen gaps in society, or will we demand equity in the age of automation?

What is your opinion? Can AI ever be entirely unbiased? Share your ideas in the comments!
